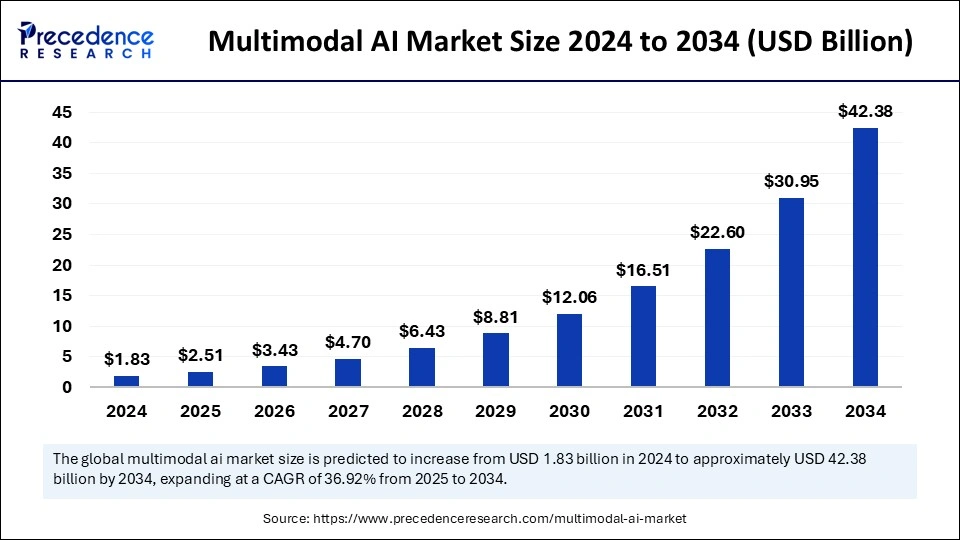

The global multimodal AI market size was estimated at USD 1.83 billion in 2024 and is expected to reach around USD 42.38 billion by 2034, growing at a compound annual growth rate (CAGR) of 36.92% from 2025 to 2034.

Get a sample copy of the report: https://www.precedenceresearch.com/sample/5728

Multimodal AI Market Key Points

- In 2024, North America emerged as the leading region, holding a 48% market share.

- Asia Pacific is forecast to grow at the fastest pace during the projection period.

- The software segment contributed the highest revenue share, 66%, in 2024.

- The services segment is set to expand at a CAGR of 38% over the forecast period.

- Among data modalities, text data held the largest market share in 2024.

- Speech & voice data is projected to be the fastest-growing modality in the coming years.

- The media & entertainment industry dominated the end-use segment in 2024.

- BFSI (banking, financial services, and insurance) is poised for rapid growth over the forecast period.

- Large enterprises held the largest market share in 2024.

- SMEs (small and medium-sized enterprises) are expected to gain considerable traction over the forecast period.

AI Transforming the Multimodal AI Landscape

- Next-Level Human-Machine Interaction: AI allows machines to process and respond to several sensory inputs simultaneously, improving communication in robotics and virtual assistants.

- Accelerated Growth of Smart Devices: AI enhances multimodal capabilities in smart home systems, wearables, and IoT devices, making them more adaptive and intelligent.

- Advanced Autonomous Systems: AI-powered multimodal perception enables self-driving cars, drones, and robotic systems to interpret complex real-world environments more effectively.

- Real-Time Multimodal Translation: AI-driven language models integrate speech, text, and images to deliver accurate real-time translations, improving cross-language communication.

- Empowering Augmented and Virtual Reality: AI enhances multimodal AR/VR experiences by integrating visual, auditory, and gesture-based interactions for more immersive digital environments.


Multimodal AI Market Scope

| Report Coverage | Details |
| --- | --- |
| Market Size by 2034 | USD 42.38 Billion |
| Market Size in 2025 | USD 2.51 Billion |
| Market Size in 2024 | USD 1.83 Billion |
| Market Growth Rate from 2025 to 2034 | CAGR of 36.92% |
| Dominated Region | North America |
| Fastest Growing Market | Asia Pacific |
| Base Year | 2024 |
| Forecast Period | 2025 to 2034 |
| Segments Covered | Component, Data Modality, End Use, Enterprise Size, and Region |
| Regions Covered | North America, Europe, Asia Pacific, Latin America, and Middle East and Africa |
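As a quick consistency check on the scope table, the stated growth rate can be recomputed from the 2024 and 2034 market sizes. The sketch below is a minimal Python illustration, assuming the standard CAGR formula, (end / start)^(1 / years) - 1, and assuming the rate compounds over ten annual periods from the 2024 base year; the helper names `cagr` and `project` are hypothetical and chosen only for this example.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Market sizes taken from the scope table, in USD billion.
size_2024 = 1.83
size_2034 = 42.38

# Assumption: the rate compounds over 10 periods starting from the 2024 base.
rate = cagr(size_2024, size_2034, 2034 - 2024)

# One year of growth from the 2024 base gives the implied 2025 size.
size_2025 = project(size_2024, rate, 1)

print(f"Implied CAGR: {rate:.2%}")
print(f"Implied 2025 size: USD {size_2025:.2f} billion")
```

Under these assumptions the implied rate rounds to 36.92% and the implied 2025 size rounds to USD 2.51 billion, matching the table. This suggests the published rate spans ten compounding years from the 2024 base, even though the forecast period is labeled 2025 to 2034.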

Multimodal AI Market Dynamics

Drivers

The widespread integration of AI into consumer applications, such as virtual assistants, recommendation systems, and smart home devices, is driving the growth of the multimodal AI market. Businesses are increasingly leveraging AI-driven insights to enhance customer experiences and optimize decision-making. The demand for real-time AI interactions, particularly in industries like finance, automotive, and retail, is accelerating the adoption of multimodal AI solutions.

Additionally, advancements in AI-powered computer vision and natural language processing are making it easier to deploy multimodal AI across different platforms.

Opportunities

The rise of multimodal AI in the entertainment and media industries presents new opportunities for content creation and audience engagement. AI-generated media, including interactive storytelling, virtual influencers, and personalized video recommendations, is revolutionizing digital experiences.

The financial sector is also embracing multimodal AI to enhance fraud detection and risk assessment through a combination of text, image, and voice analysis. Furthermore, the growing need for AI-driven accessibility solutions, such as speech-to-text and image-to-audio conversion, provides a promising avenue for market expansion.

Challenges

One of the biggest challenges in the multimodal AI market is the complexity of training and fine-tuning AI models to handle multiple data types simultaneously. Developing robust AI architectures that can process and interpret multimodal inputs with high accuracy remains a technical hurdle. Ethical considerations, including bias in AI algorithms and the potential misuse of AI-generated content, raise concerns among regulators and industry stakeholders.

The requirement for large datasets and extensive computational resources can also be a limiting factor, particularly for smaller companies with limited AI infrastructure.

Regional Analysis

North America dominates the market due to strong investment in AI research and development, particularly in the United States and Canada. The presence of leading tech giants and a high demand for AI-powered applications further solidify its market position. Asia Pacific is emerging as a major growth region, fueled by AI-driven initiatives in China, South Korea, and India.

Governments across Asia Pacific are investing heavily in AI research and infrastructure to boost innovation. Europe is maintaining steady growth, with a focus on responsible AI development and regulatory compliance to ensure that AI deployment aligns with ethical standards.

Multimodal AI Market Recent Developments

- In December 2024, Google released Gemini 2.0 Flash as its new flagship AI model, updated other AI features, and made Gemini 2.0 Flash Thinking Experimental available. The new model is accessible through the Gemini app, extending its AI reasoning capabilities.

- In December 2023, Alphabet Inc. unveiled its Gemini AI model. Google reported that Gemini Ultra was the first model to outperform human experts on the widely used Massive Multitask Language Understanding (MMLU) benchmark.

- In October 2023, Reka launched Yasa-1, its first multimodal AI assistant, which understands text, images, short videos, and audio. Enterprises can customize Yasa-1 on their private datasets across modalities, enabling new experiences for a range of use cases.

- In September 2023, Meta announced its Ray-Ban smart glasses with a multimodal AI assistant that gathers details about the wearer's surroundings through built-in cameras and microphones. Users activate the assistant with the voice command "Hey Meta," after which it can see and hear what is happening around them.

Multimodal AI Market Companies

- Amazon Web Services, Inc.

- Aimesoft

- Google LLC

- Jina AI GmbH

- IBM Corporation

- Meta

- Microsoft

- OpenAI, L.L.C.

- Twelve Labs Inc.

- Uniphore Technologies Inc.

Segments Covered in the Report

By Component

- Software

- Services

By Data Modality

- Image Data

- Text Data

- Speech & Voice Data

- Video & Audio Data

By End-use

- Media & Entertainment

- BFSI

- IT & Telecommunication

- Healthcare

- Automotive & Transportation

- Gaming

- Others

By Enterprise Size

- Large Enterprises

- SMEs

By Region

- North America

- Europe

- Asia Pacific

- Latin America

- Middle East and Africa (MEA)
